Earlier this year, a side project led me to research how to run WordPress in Google Cloud, with the objective of minimizing hosting costs. I went down a rabbit hole with Cloud Run, and came out with a platform that runs (almost) entirely on the GCP Free Tier. This site was launched as a way to make direct observations and improvements. It worked so well that I migrated two personal sites onto the same platform and ditched my old provider.

The end result – my hosting costs dropped to almost $0, at pennies a month per site. And I picked up a few new skills along the way.

This article provides a summary of the modernization journey that I took, the technical solution in its current form, and my initial results on cost.

BACKGROUND

First things first: WordPress. It’s an open source CMS (content management system) that powers 39% of the web, as of 2020. It’s been around since 2003, and an entire ecosystem has developed around it.

WordPress is accessible enough that an average Joe can use it for a personal blog, but flexible enough that it can be the workhorse for a high-traffic commercial website. The underlying technology is a LAMP stack that needs to be hosted in a place where it can be reliably accessed on a 24×7 basis.

A WordPress Hosting Primer

An understanding of WordPress hosting is necessary to see the challenges that are being addressed. In order for a WordPress-driven website like this one to exist, two components are required: the domain name and site hosting.

Acquiring and managing a domain name is as simple as going to a registrar like GoDaddy or Google Domains (I use and recommend the latter), confirming the availability of the desired domain, buying it, then configuring the DNS records for the hosting provider. Set the domain to auto-renew and enable privacy protection. That’s the beginning and end of this topic.

Site hosting is much more nuanced. Every WordPress site requires resources like disk space (to store the site’s content, media, and database), network bandwidth (to serve the website to end users), and other bells and whistles like site backups and SSL certificate management. These resources have both a financial and technical cost. Hosting providers typically offer tiers of metered usage and add-ons at per-month price points. Some abstract the details away and offer “WordPress as a Service” in a SaaS model.

The most common approaches to host a WordPress site are:

– WordPress.com: A logical starting point for the casual blogger. In just minutes, you can be up and running with your own free blog at wordpress.com, installed and hosted for you. A domain name isn’t even required at this stage, as you’ll get a subdomain (e.g. kevinslifer.wordpress.com). The hosting complexity is as low as it gets – you’re renting a sandboxed WordPress site in a SaaS model. Average price point: $0-$25.

– Managed WordPress Hosting: Providers like WP Engine and SiteGround offer a similar SaaS model, but with more powerful features. The hosting complexity is still very low, but these providers have value-adds to optimize the WordPress experience at a premium price point. This is where most professional WordPress sites managed by non-technical teams live. It’s the sweet spot. Average price point: $5-$60.

– Shared Web Hosting or Virtual Private Server (VPS): Relative to the previous two options, generic web hosting goes from “renting a WordPress site” to “renting a server to run a WordPress site on”. You’ll get a server (either shared or dedicated) and cPanel as an interface to manage it, which might come with a one-click option to install WordPress. From there, you’re in charge of almost everything else, with a greater degree of control over the technology stack. Average price point: $10-$50.

– Roll Your Own: Finally, you can install WordPress anywhere you’d like, anyhow you’d like – for example, on your home computer, or on a Raspberry Pi (though neither is practical for the long haul). This is the most hands-on option, but the level of control you will have over every aspect of the setup is high. Average price point: $0-$∞

Prior to this project, I was a customer of A Small Orange in a Shared Web Hosting model. The new approach that I’ve adopted is a variation of Roll Your Own, with the WordPress stack distributed across several Google Cloud services.

My Experience With WordPress

In 2015, my wife and I quit our jobs to travel around the world. I wanted to create a website to capture the memories of this experience, and decided that teaching myself how to self-host WordPress would give me something interesting to talk about in job interviews when I returned home and needed to get back to work. I bought a shared hosting package from A Small Orange, the domain whereschevin.com, and within minutes was up to my neck in things that I didn’t understand.

My background is in software engineering, not systems administration. So even though I was able to perform a one-click install of WordPress through cPanel in less than five minutes, it took me about five days to get into cPanel and to that one-click button. As soon as I saw my live site, I wanted to delete the “Proudly powered by WordPress” link at the bottom of every page. Generating an SSH key to log into the server and edit a PHP file with Vim without breaking the site… another five days. It’s a good thing I didn’t have a day job, because learning my way around a LAMP stack became a full-time endeavor. The learning curve leveled off after being hands-on with most facets of the WordPress stack over the roughly ten months that we traveled.

Fast forward five years to mid-2020. Most of the world is handing their pictures over to Instagram and calling it a day. I’m still on A Small Orange, running a private baby picture WordPress site for friends and family at littlechevin.com. whereschevin.com lives on as a memory of what once was. I’m pretty comfortable with the shared hosting model and know my way around WordPress. I’ve even hacked together a theme called InstaChevin and hand-rolled undocumented configuration changes to my server to optimize my sites. I’ve accidentally taken the sites down for days at a time while learning how to be a good webmaster.

During this five year period, the public cloud blew up and transformed the entire IT landscape. Code can now be shipped and run in Docker containers, and serverless is hot. Having worked at the forefront of this industry (specifically with Google Cloud) for over two years, I knew there was a more modern way to host a WordPress site. I just didn’t have a reason to take a serious look into it… until I did.

THE OBJECTIVE

This summer, some close friends who were opening a brewery approached me for help with a website. Wanting to stay in my comfort zone, I recommended WordPress, but suggested that we evaluate hosting options to find the best provider for them.

I gravitated towards Google Cloud because of the overlap with my professional skills. Kinsta, a Managed WordPress Hosting provider built on Google Cloud, came up immediately and had great reviews, but it was hard to justify the $30/month entry point. To be clear, $30/month to host a single site is above average, but not excessive. The problem I wanted to solve was that this site would have a minimal number of visitors until the brewery opened (and even then, most traffic could end up going to social media channels). If there are zero visitors in a given month, the cost should approach zero; if opening month is busy, then some cost would be expected. The ideal provider would offer pricing elasticity to support this scenario. This doesn’t really exist in the WordPress hosting marketplace, but it’s a predominant feature of public cloud services.

Google Cloud has a WordPress landing page that outlines three compute paradigms for running WordPress. These are factual, but not that interesting or cost-effective relative to my objective. What was missing from that page was Cloud Run, a newer, “scale to zero”, serverless runtime for containerized applications. “Scale to zero” was what I had in mind.

The GCP Free Tier is an overlooked and underrated feature of Google Cloud where many products offer a monthly quota of complimentary usage. Cloud Run has a very generous free tier quota of 2 million requests, 360,000 GB-seconds of memory, and 180,000 vCPU seconds of compute time – with pricing charged in 100 millisecond increments. Projecting usage in 100 millisecond increments would be a futile exercise, but the bottom line was that if WordPress could work on Cloud Run, a site with modest traffic would stay well within that quota.
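To make the quota concrete, here’s a minimal Python sketch of the arithmetic. It rests on several assumptions. First, it treats every request as billed on its own, ignoring the overlap that concurrent requests share, which makes the estimate conservative. Second, it rounds an assumed average request duration up to the next 100 millisecond increment. Third, it uses hypothetical container sizing of 1 vCPU and 0.5 GB of memory. None of these numbers come from my deployment.

```python
import math
from dataclasses import dataclass

# Cloud Run free tier quotas per month, as quoted above.
FREE_REQUESTS = 2_000_000
FREE_VCPU_SECONDS = 180_000
FREE_GB_SECONDS = 360_000


@dataclass
class Workload:
    requests: int           # requests served per month
    avg_latency_ms: float   # assumed average time to serve one request
    vcpus: float = 1.0      # assumed vCPUs per container instance
    memory_gb: float = 0.5  # assumed memory per container instance


def billable_seconds(w: Workload) -> float:
    """Billable time if every request is billed separately, rounded up to 100 ms."""
    increments = math.ceil(w.avg_latency_ms / 100)
    return w.requests * increments / 10


def quota_utilization(w: Workload) -> dict[str, float]:
    """Fraction of each free tier quota consumed (1.0 means the quota is used up)."""
    seconds = billable_seconds(w)
    return {
        "requests": w.requests / FREE_REQUESTS,
        "vcpu_seconds": seconds * w.vcpus / FREE_VCPU_SECONDS,
        "gb_seconds": seconds * w.memory_gb / FREE_GB_SECONDS,
    }
```

For example, 30,000 requests a month at an assumed 400 ms each works out to 12,000 billable seconds. That is roughly 7% of the vCPU-seconds quota and under 2% of the memory quota.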

There would be a few other problems to solve. The idea was half baked, but the objective was clear – drive cost towards $0, or go with a Managed WordPress Hosting provider and move on.

THE SOLUTION

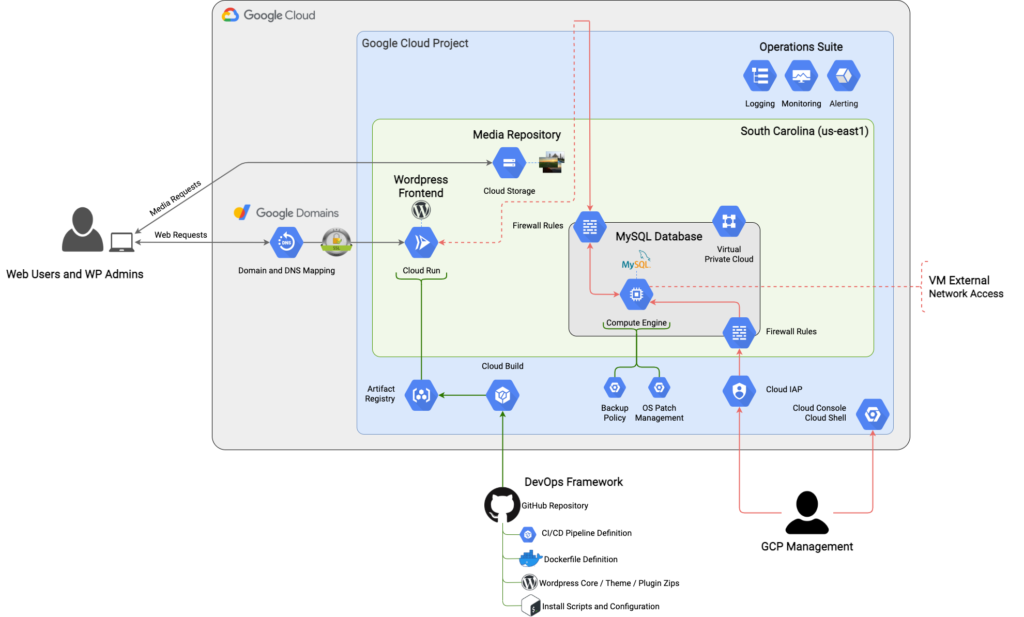

The diagram below represents the current state of the platform:

The WordPress stack translates into three components that separate compute, relational data, and media storage: the WordPress Frontend, the MySQL Database, and the Media Repository.

WordPress Frontend

The main prerequisite to run WordPress in a “scale to zero” model on Cloud Run is that it has to be packaged as a Docker container. The good news is that this has already been done in the form of the official WordPress image on Docker Hub. The less good news is that this image won’t work in Cloud Run off the shelf. It’s still a good starting point, and it’s the base image in my Dockerfile.

A very important difference between Cloud Run and other Docker runtime environments (like Docker Compose, Docker Swarm, and even Kubernetes) is that Cloud Run offers no native persistent filesystem between container lifecycles. WordPress externalizes most site content into a MySQL database, but it also writes to the local server filesystem. The two most problematic examples are installing plugins and themes, and performing in-place automatic updates to them.

The official Docker image solves for this in a containerized world through the use of a volume (which maps to a local filesystem on the Docker runtime). That luxury doesn’t exist in Cloud Run; the workaround is to engineer a CI/CD pipeline that bakes every site-specific plugin, theme, and code adjustment up-front into an immutable image. The image represents a point-in-time snapshot of the site’s frontend, capable of being repeatedly launched and destroyed without any impact to the site or user experience.

Building automation to reliably bake an immutable image was the most difficult and time-consuming (but also rewarding) part of this project. The instructions in my Dockerfile perform the following:

– Inject configuration changes into the Apache Web Server and PHP runtime

– Delete the contents of the volume that’s mounted by the official image, and replace it with the WordPress core, themes, and plugins that exist in the site’s GitHub repo

– Inject WordPress configuration through both local secrets files in the container (for a small set of sensitive configurations) and environment variables (for the rest)
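Here’s a hedged Python sketch of that configuration split. Several pieces are assumptions for illustration: the `KEY=VALUE` format for the config file, the choice of which keys count as sensitive, and the function names. The `_FILE` suffix follows the convention the official WordPress image documents for reading values such as `WORDPRESS_DB_PASSWORD` from a file instead of an environment variable.

```python
from pathlib import Path

# Hypothetical: which keys are sensitive is a per-site decision.
SENSITIVE_KEYS = {"WORDPRESS_DB_PASSWORD", "WORDPRESS_AUTH_KEY"}


def parse_conf(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, ignoring blank lines and # comments."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"malformed line: {line!r}")
        config[key.strip()] = value.strip()
    return config


def split_config(config: dict[str, str], secrets_dir: Path) -> dict[str, str]:
    """Write sensitive values to files and return env vars for everything else.

    Each sensitive KEY is replaced by KEY_FILE, pointing at the file written.
    """
    env = {}
    secrets_dir.mkdir(parents=True, exist_ok=True)
    for key, value in config.items():
        if key in SENSITIVE_KEYS:
            path = secrets_dir / key.lower()
            path.write_text(value)
            env[f"{key}_FILE"] = str(path)
        else:
            env[key] = value
    return env
```

Keeping the secrets out of the environment means they don’t show up in the Cloud Run service’s environment variable listing. The trade-off is that they are baked into the image, which is one more reason to keep the image repository private.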

A “five day issue” that surfaced here was a 15 second cold start. A cold start is the amount of time that’s required for a new container to launch and complete its initialization activities before it can process requests. Cold start time manifests as a delay to the end user, so in this case when I loaded my WordPress website and a new container had to launch to serve it, I’d have to wait out the 15 second cold start time for the request to be processed. My expectations for load times were reasonable; 15 seconds wasn’t reasonable.

Reverse engineering the WordPress Dockerfile led to the underlying issue: the entrypoint script checks for an existing WordPress install in the volume it mounts at /var/www/html, and if it doesn’t exist, copies the WordPress install that comes with the image from /usr/src/wordpress into the volume. The entrypoint script is executed when each container is launched. This makes sense for Docker runtimes that have persistent volumes. In the Cloud Run model, not so much. The WordPress install just needs to exist in /var/www/html. The problem was that the upstream image declared this directory as a volume, preventing me from copying files into it from inside the image. It’s also not possible to unmount a volume that was declared by an upstream image.
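The upstream check is a shell script. The Python below is only a simplified model of its logic, based on my reading of the entrypoint: it looks for `index.php` or `wp-includes/version.php` before copying. The model shows why a volume that starts out empty on every launch makes every cold start pay for a full copy.

```python
import shutil
from pathlib import Path


def ensure_wordpress_installed(docroot: Path, source: Path) -> bool:
    """Copy the bundled WordPress into docroot unless an install already exists.

    Returns True when a copy happened: the slow path that an ephemeral,
    empty volume forces on every new container.
    """
    already_installed = (docroot / "index.php").exists() or (
        docroot / "wp-includes" / "version.php"
    ).exists()
    if already_installed:
        return False
    shutil.copytree(source, docroot, dirs_exist_ok=True)
    return True
```

With a persistent volume, the copy happens once and every later launch takes the fast path. On Cloud Run, every new container starts with an empty directory, so every launch takes the slow path.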

Docker does support copying files from outside the image to a volume mount directory. What ended up working was building a full WordPress Frontend (core + themes + plugins + customizations) outside of the image in the CI/CD pipeline, then copying it into the volume mount on the image at /var/www/html. Does this trick Docker? I’m not sure, and would like to someday understand why this is allowed. Unfortunately, it requires having the WordPress zip distributable available outside of the Docker image, which adds some overhead to the update process for the WordPress core. But cold starts are now trivial, which is more important.
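Below is a minimal sketch of that staging step, written as a Python build script. It assumes the WordPress zip, which unpacks into a top-level `wordpress/` folder. It also assumes a repo layout with `wp-content/themes` and `wp-content/plugins`. The function and directory names are hypothetical. A Dockerfile `COPY` of the staged `wordpress/` directory into `/var/www/html` would then finish the job.

```python
import shutil
import zipfile
from pathlib import Path


def stage_frontend(wordpress_zip: Path, repo_wp_content: Path, staging: Path) -> Path:
    """Assemble WordPress core plus the site's themes and plugins in one folder."""
    if staging.exists():
        shutil.rmtree(staging)  # start clean so stale files never leak into the image
    staging.mkdir(parents=True)
    with zipfile.ZipFile(wordpress_zip) as zf:
        zf.extractall(staging)  # the core zip unpacks into staging/wordpress/
    site_root = staging / "wordpress"
    # Overlay the site's own themes and plugins from the repo on top of core.
    for sub in ("themes", "plugins"):
        src = repo_wp_content / sub
        if src.is_dir():
            shutil.copytree(src, site_root / "wp-content" / sub, dirs_exist_ok=True)
    return site_root
```

Doing the assembly outside the image keeps the Dockerfile itself down to a single copy into the directory the upstream image declared as a volume.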

MySQL Database

This part is quite simple. WordPress needs a relational database to store the site’s content, and MySQL is the officially supported platform. Google Cloud offers fully-managed MySQL databases through its Cloud SQL service. Unfortunately, the lowest price point is $7.67/month (not counting disk space). That makes this a non-starter.

The most logical alternative is to run MySQL on a virtual machine. Incidentally, Google Cloud’s free tier includes full-time usage of an e2-micro instance. An e2-micro is a tiny virtual Linux server with 1 GB of memory and 0.25 vCPU (that occasionally bursts to 2 full cores). The free tier also allows for 30 GB of disk space, 5 GB of snapshot storage (for backups), and 1 GB of network egress. Is it crazy to run a database on a server with such minuscule specs? Maybe… but for a low-volume site, maybe not.

MySQL isn’t prescriptive on minimum resource requirements, because they depend on the database size and transactional volume that need to be supported. To squeeze as much performance out of this tiny VM as possible, I provisioned the largest persistent disk allowed within the free tier (30 GB) to maximize the IOPS budget, then allocated 1 GB of that disk as a swap file to relieve memory pressure. The fractional CPU is still a bottleneck, but the whole platform stood up to a respectable amount of Apache Bench load testing without falling over. In practice, it has been more than sufficient.

Automated daily snapshots of the persistent disk are configured, with a 3 day retention policy. This stays within the 5 GB quota, and eliminates the need for a WordPress plugin to perform site backups.
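Compute Engine snapshot schedules enforce this retention natively, so no code is needed on the platform. The hypothetical Python function below only spells out what a 3-day rule means for a given set of snapshots. The snapshot names and timestamps are made up.

```python
from datetime import datetime, timedelta


def snapshots_to_delete(
    created: dict[str, datetime], now: datetime, retention_days: int = 3
) -> list[str]:
    """Names of snapshots older than the retention window, sorted by name."""
    cutoff = now - timedelta(days=retention_days)
    return sorted(name for name, ts in created.items() if ts < cutoff)
```

Daily snapshots of the same disk are incremental, so keeping only a few days of them is what keeps storage under the 5 GB allowance.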

The downside of having a VM is that it requires care and feeding in the form of OS patching and MySQL updates.

Media Repository

Another simple problem to solve is that WordPress needs a place to store user-uploaded media. The default behavior is to save it locally on the server. The idea of offloading media to a public cloud or CDN provider’s storage where it can be cached and served faster isn’t new, and many plugins have been developed to support this.

Two plugins exist to offload media to Google Cloud Storage: the official GCS plugin, and WP-Stateless. The latter seemed to be the better of the two, so that one is in use. The experience is seamless; make activating and configuring the plugin the first two steps after the WordPress install, upload media as you normally would, and it will be hosted and served from storage.googleapis.com.

Hand-Rolled Site Customization

An unavoidable reality of having a WordPress website is that it will eventually require some hand-rolled customization – for example, custom text in a page footer or theme modifications. In a traditional hosting model, this is easy to accomplish. Update the file on the server, and keep track of what you did. If you use a code repo and repeatable deployment practices to accomplish this, you’re in good shape.

There’s no hand-rolling in Cloud Run, where not only is the running container immutable, it’s impossible to access. This forces good DevOps practices.

When creating kevinslifer.com, I adopted a theme that was using an old version of Font Awesome for its social menu icons. The newest version supported Strava, and I wanted this link in my social menu. To make this change, I engineered a step in the CI/CD pipeline to inject the necessary modifications to the theme to upgrade Font Awesome during the build process. This is accomplished through a series of rm, cp, echo >>, and sed statements. It’s technical debt that I’d rather not have, but it works. This concept is generalized into the CI/CD pipeline as a hook to the customizations.sh script.
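The rm/cp/sed steps translate to something like the Python below. It’s a sketch, not the contents of customizations.sh. The function names, file patterns, and version strings are hypothetical, and the code does literal string replacement rather than sed’s regular expressions.

```python
import shutil
from pathlib import Path


def replace_in_files(root: Path, patterns: tuple[str, ...], old: str, new: str) -> list[Path]:
    """sed-style in-place replacement (literal, not regex) across matching files."""
    files = sorted({p for pattern in patterns for p in root.rglob(pattern) if p.is_file()})
    changed = []
    for path in files:
        text = path.read_text(encoding="utf-8")
        if old in text:
            path.write_text(text.replace(old, new), encoding="utf-8")
            changed.append(path)
    return changed


def swap_asset_dir(theme_dir: Path, rel: str, new_assets: Path) -> None:
    """The rm -rf + cp -r step: replace a bundled asset folder with a newer copy."""
    target = theme_dir / rel
    if target.exists():
        shutil.rmtree(target)
    shutil.copytree(new_assets, target)
```

Returning the list of changed files gives the build log something to check. If an upstream theme update makes a replacement silently match nothing, the empty list is the first hint that the customization needs revisiting.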

DevOps Framework

The distributed nature of the platform’s architecture creates a lot of moving parts. The orchestration of these parts is a purpose-built DevOps framework.

100% of the site code and configuration lives in a GitHub repo; specifically, a private mirror of the generic public repo. Private is emphasized because sensitive WordPress configurations reside in the variables.conf file, which, if publicly exposed, would reveal enough information for the WordPress database to be accessed.

The code is built and deployed with a pipeline that runs on Cloud Build, Google Cloud’s serverless CI/CD platform. The pipeline has a build trigger configured to the private GitHub repo, such that when a change is checked in, the build process will be invoked to deploy the change. There isn’t much in the way of automated testing in the pipeline, so some oversight is necessary – at a minimum to check for failures in the build process itself, and to verify that the site loads after the new container is deployed.

Built images are stored in an Artifact Registry repo, then deployed to Cloud Run from there. As a cost management motion, the CI/CD pipeline implements a lifecycle policy that retains a rolling history of the live image + last three deployed images.
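The selection rule behind that policy can be sketched in a few lines of Python. The `Image` type and the function are hypothetical. The sketch assumes that “last three deployed images” means the three most recently deployed images other than the live one, which still holds after a rollback to an older image.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Image:
    digest: str
    deployed_at: datetime


def images_to_delete(images: list[Image], live_digest: str, keep_previous: int = 3) -> list[str]:
    """Digests to prune: everything except the live image and the N most recent others."""
    newest_first = sorted(images, key=lambda i: i.deployed_at, reverse=True)
    previous = [i for i in newest_first if i.digest != live_digest][:keep_previous]
    keep = {live_digest} | {i.digest for i in previous}
    return [i.digest for i in newest_first if i.digest not in keep]
```

Keying on the live digest, rather than simply keeping the newest four, guarantees the image Cloud Run is actually serving is never pruned.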

What this translates into on a day-to-day basis is that the most common maintenance activity, updating plugins, requires manual work. Instead of allowing the site to automatically update itself in-place, new plugin zips need to be downloaded and checked into the GitHub repo. From there, the CI/CD pipeline will create a new image and deploy it to Cloud Run. The upside to this process is that the images serve as deployable snapshots of the site’s code, so rolling back from a bad plugin update is done in one click. This is a significant benefit over updating in-place, where rolling back is complicated. I’m confident that some form of “self-update” functionality could be implemented on this platform, but it’s a problem for another day.
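Here’s a minimal sketch of the manual step in Python. It assumes plugin zips in the usual wordpress.org layout, with a single top-level folder named after the plugin slug, and a repo that keeps plugins under one directory. `install_plugin_zip` is a hypothetical helper. Removing the old folder first matters: files deleted in the new release would otherwise linger in the image.

```python
import shutil
import zipfile
from pathlib import Path, PurePosixPath


def install_plugin_zip(zip_path: Path, plugins_dir: Path) -> Path:
    """Replace a plugin's folder in the repo with the contents of a downloaded zip."""
    with zipfile.ZipFile(zip_path) as zf:
        tops = {PurePosixPath(name).parts[0] for name in zf.namelist()}
        if len(tops) != 1:
            raise ValueError(f"expected one top-level folder, found {sorted(tops)}")
        slug = tops.pop()
        target = plugins_dir / slug
        if target.exists():
            shutil.rmtree(target)  # drop the old version entirely
        zf.extractall(plugins_dir)
    return target
```

After running it, the update is just a commit, and the pipeline takes it from there.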

Cloud Architecture Pros and Cons

Making cost the highest priority (over other things like resilience, performance, and security) created constraints that led to architecture decisions which could be improved. They’re acknowledged here because a few small changes would make this platform adhere to enterprise production cloud architecture practices, but their respective code is outside of the scope of this exercise.

The first is that the platform is geographically consolidated to a single Google Cloud region (South Carolina). A benefit of leveraging a public cloud provider is that it’s easier to build geographically distributed solutions. In this case, due to cost, the opposite exists. This makes the site susceptible to outages if that region were to have issues, but this is on par with most traditional hosting options. This platform could easily become globally distributed by deploying the WordPress Frontend to multiple Cloud Run regions in a Serverless Network Endpoint Group (NEG) behind a Global Load Balancer (which would support edge caching of WordPress Media with Cloud CDN) and moving the MySQL Database into the Cloud SQL service to leverage cross-region replicas.

The second is that connectivity from the WordPress Frontend containers to the MySQL Database is hairpinned to the external IP of the VM that MySQL runs on, creating a (very small) attack surface. In terms of performance, this isn’t problematic. I don’t think the requests fully exit Google’s network, but the communication definitely isn’t internal-only. In terms of security, it’s an imperfect setup. The MySQL VM has a publicly accessible external IP address; however, firewall rules lock it down to MySQL connectivity only (on TCP port 3306). How big of a risk is this? A bad actor would need both the IP and the credentials to get in. Storing these in a private GitHub repo and using strong credentials are good mitigating actions. That said, there’s still an external IP address that freely accepts connections on TCP port 3306. Does this make the MySQL VM susceptible to being port scanned and potentially flooded? Sure. This entire problem goes away by removing the external IP from the MySQL VM and facilitating internal-only connectivity through Serverless VPC Access. This was the original design (and is still commented out in the provisioning code for future reference), but Serverless VPC Access is cost-prohibitive relative to the objective.

There are silver linings to balance out these less than ideal characteristics. Because this platform lives in Google Cloud, it benefits from residing on a fully-owned private backbone that carries over 40% of the internet’s daily traffic. The network is optimized to move traffic efficiently. It’s fast, and while I don’t have before and after measurements, the sites that I migrated in from my shared web host are noticeably more responsive. And the software-defined, hyper-scale nature of the cloud means that the difference between regional today and global tomorrow is just code. That’s powerful.

THE RESULTS

The first few iterations of the platform came in well above $0. Even though Google Cloud pricing is transparent (in the sense that it’s documented), it gets confusing to measure and trace in a live project. Such is life when working with a cloud provider; if a service is being consumed, it will show up on the bill – but determining where the cost is coming from can be a nontrivial exercise. That said, the current iteration of the platform will baseline at the $0 run rate target.

Elastic pricing is a notable deviation from traditional forms of WordPress hosting, where the price is fixed in return for a fixed allocation of resources, regardless of usage. “What happens when I reach my limit?” is a good question to ask (particularly when operating under a network bandwidth or visitor budget), and the behavior varies by provider – some will simply shut you down until the next billing cycle, while others will charge you for the overage in increments after the fact. The same question applies here, and a pricing model that has a greater degree of elasticity will be more sensitive to resource utilization. $0 a month today is great, but am I at risk of my hosting charges spiraling out of control once I exceed the free tier quota?

It’s worth some analysis. The core elastic GCP services in use that will directly scale with end-user traffic are noted below (with their respective pricing models):

– Cloud Run (for the WordPress Frontend): $0.00002400 / vCPU-second (CPU) + $0.00000250 / GiB-second (Memory) + $0.40 / million requests

– Internet Egress (for all response data): $0.19 / GB (Australia) | $0.23 / GB (China) | $0.12 / GB (Everywhere Else)

Based on the above, a site’s traffic patterns and content are the largest determining factors in generating cost. Heavy site traffic will result in greater consumption of both Cloud Run and Internet Egress, and media-rich content will further amplify consumption of the latter. There are several other GCP services in use, but end-user traffic doesn’t directly translate into cost, so they can be optimized independently.
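To answer the “spiraling out of control” question with numbers instead of intuition, the rates above can be combined with the free tier quotas into a rough monthly estimator. This is a sketch under assumptions. The usage figures are inputs you supply. `free_egress_gb` stands in for whatever free egress allowance applies, and it is deducted only from the “everywhere else” bucket, which is a simplification. The estimator ignores every GCP service whose cost doesn’t scale with traffic.

```python
from dataclasses import dataclass

# Rates quoted above, in USD.
VCPU_SECOND = 0.000024
GIB_SECOND = 0.0000025
PER_MILLION_REQUESTS = 0.40
EGRESS_PER_GB = {"australia": 0.19, "china": 0.23, "other": 0.12}

# Cloud Run free tier quotas per month, quoted earlier.
FREE_VCPU_SECONDS = 180_000
FREE_GIB_SECONDS = 360_000
FREE_REQUESTS = 2_000_000


@dataclass
class MonthlyUsage:
    vcpu_seconds: float
    gib_seconds: float
    requests: int
    egress_gb: dict[str, float]  # keyed like EGRESS_PER_GB
    free_egress_gb: float = 0.0  # assumption: free allowance, applied to "other" only


def monthly_cost(u: MonthlyUsage) -> float:
    """Estimated bill in USD for usage beyond the free tier (not rounded)."""
    compute = max(0.0, u.vcpu_seconds - FREE_VCPU_SECONDS) * VCPU_SECOND
    memory = max(0.0, u.gib_seconds - FREE_GIB_SECONDS) * GIB_SECOND
    billable_requests = max(0, u.requests - FREE_REQUESTS)
    request_cost = billable_requests / 1_000_000 * PER_MILLION_REQUESTS
    egress = 0.0
    for region, gb in u.egress_gb.items():
        if region == "other":
            gb = max(0.0, gb - u.free_egress_gb)
        egress += gb * EGRESS_PER_GB[region]
    return compute + memory + request_cost + egress
```

Plugging in hypothetical numbers shows how the overage behaves. Take 200,000 vCPU-seconds, 2.5 million requests, 3 GB of ordinary egress with 1 GB free, and 0.5 GB of Australian egress. That comes to about $1.02 for the month: a gentle slope past the quota, not a cliff.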

I’m working with a short history of consumption details, so I know that further cost analysis and optimization will be required in the future. High-level metrics and invoice costs for each site for the month of December (the first full month that this platform has been live) are below:

– kevinslifer.com: ~800 visitors, $0.04

– whereschevin.com: ~200 visitors, $0.03

– littlechevin.com: ~100 visitors, $0.02

Those real pennies are coming from two sources: Cloud Run international network egress (e.g. someone outside of North America visits the site – this is outside of the fine print of the free tier) and somewhere in Cloud Storage (I haven’t been able to trace what aspect – my site media should be compliant with the free tier parameters). Otherwise, the resources being consumed are within the free tier.

A dashboard that measures individual service usage against its free tier budget would be insightful. I’d like to be able to answer questions like “What’s the maximum projected monthly visitor count that I could have before crossing above the free tier threshold?” I’m pretty confident that this can be built in the Cloud Operations suite (and paired with alerts that proactively inform me), but for now I’m relying on a less precise approach based on post-consumption billing data.
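Until that dashboard exists, the visitor-count question can at least be approximated by inverting the quota math. This Python sketch rests on assumptions you would need to measure: requests per visit, average billable time per request, and container sizing. It bills each request separately, which is conservative. It also ignores egress and idle instance time, so treat the result as a ballpark rather than a threshold.

```python
import math

# Cloud Run free tier quotas per month, as quoted earlier.
FREE = {"requests": 2_000_000, "vcpu_seconds": 180_000, "gb_seconds": 360_000}


def max_free_tier_visitors(
    requests_per_visit: float,
    billable_ms_per_request: float,
    vcpus: float = 1.0,
    memory_gb: float = 0.5,
) -> tuple[int, str]:
    """Largest monthly visitor count that fits every quota, and which quota binds first."""
    if requests_per_visit <= 0:
        raise ValueError("requests_per_visit must be positive")
    # Round each request up to the next 100 ms billing increment.
    seconds_per_request = math.ceil(billable_ms_per_request / 100) / 10
    per_visit = {
        "requests": requests_per_visit,
        "vcpu_seconds": requests_per_visit * seconds_per_request * vcpus,
        "gb_seconds": requests_per_visit * seconds_per_request * memory_gb,
    }
    limits = {k: math.floor(FREE[k] / v) for k, v in per_visit.items() if v > 0}
    binding = min(limits, key=limits.get)
    return limits[binding], binding
```

With an assumed 10 requests per visit at 400 ms each, the vCPU-seconds quota binds first, at 45,000 visitors a month. Feeding in real request and latency figures from Cloud Monitoring would make that number meaningful.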

FINAL THOUGHTS

In my professional life, I’m frequently advocating that the cloud provides an opportunity for transformation. But it requires a time investment to develop new skills, and a commitment to detach from old ways of thinking and embrace change. It’s far easier said than done.

The last six months provided an unexpected opportunity for me to experience this myself, with subject matter that has personal relevance. Having a five-year history with WordPress on a pre-cloud hosting model meant that there were skills gaps to close, and mental models to break. I’m still trying to wrap my head around how a MySQL server can survive on just 0.25 vCPU… but I have a much greater appreciation for the free tier of Google Cloud, and the power of taking an automation-first approach in the cloud.

The source for this platform is publicly available on my wordpress-on-gcp-free-tier GitHub repo, and an instance of a fully functioning WordPress site can be provisioned in less than an hour.